How Dog Training Evidence Is Evaluated

Compound evidence detail: 1 SCR / 2 parts

- [Documented] the structural absence of an industry-wide standardized canine behavioral outcome measurement and reporting system, supported by Hsu and Serpell 2003 (C-BARQ instrument), Lamb 2018 (LAIR clinician-overestimate finding), Mills 2020 (LCAS validation), Wright 2012 (DIAS validation), Daniels 2022 (UK client-rated equivalence regardless of method), and the IAABC Foundation Journal 2023 review of informal-assessment dependence

- [Heuristic] the JB framing comparing the dog-training industry's outcome-measurement vacuum to adjacent helping professions (veterinary medicine, human psychology, education) where outcome measurement is regulatorily mandated or conventionally standardized, with the qualifier that the structural claim concerns industry-scale practice and does not deny that individual trainers measure outcomes in their own work

Reading dog-training evidence well means learning to ask a different question from the one most marketing asks. Marketing asks, "Did this method look successful?" Evidence asks, "What kind of study produced that impression, what was actually measured, how many dogs were involved, how long were they followed, and what else could explain the result?" In a field as young and methodologically uneven as dog training, those questions are not academic extras. They are the difference between disciplined reading and wishful thinking. [Documented]

The notebooks behind this dispatch emphasize that the same finding can carry very different weight depending on design. A randomized or controlled trial counts differently from a retrospective owner survey. A systematic review counts differently from a single dramatic case report. A direct behavioral measure counts differently from an owner's satisfaction rating. The dog-training world often flattens those distinctions because it wants answers fast. Families deserve the distinctions kept intact.

Several familiar papers help show why. Ziv's 2017 review matters because it synthesizes multiple aversive-training studies rather than relying on one. [Documented] Vieira de Castro's 2020 paper matters because it combines observed stress behavior, cortisol, and cognitive-bias testing rather than relying only on owner impressions. Casey 2014 matters because its sample is large, even though its survey design leaves reverse causation unresolved. Cooper 2014 and China 2020 matter because they are controlled comparisons, even though their samples are small and their protocols do not settle every variant of remote-collar use.

JB therefore treats research literacy as a form of consumer protection. A family deciding how to raise or train a Golden Retriever should know why one study changes confidence more than another, why a confident trainer can still be leaning on weak evidence, and why some of JB's own positions must remain hedged until stronger comparative data exist. [Documented]

What It Means

Start With the Evidence Hierarchy

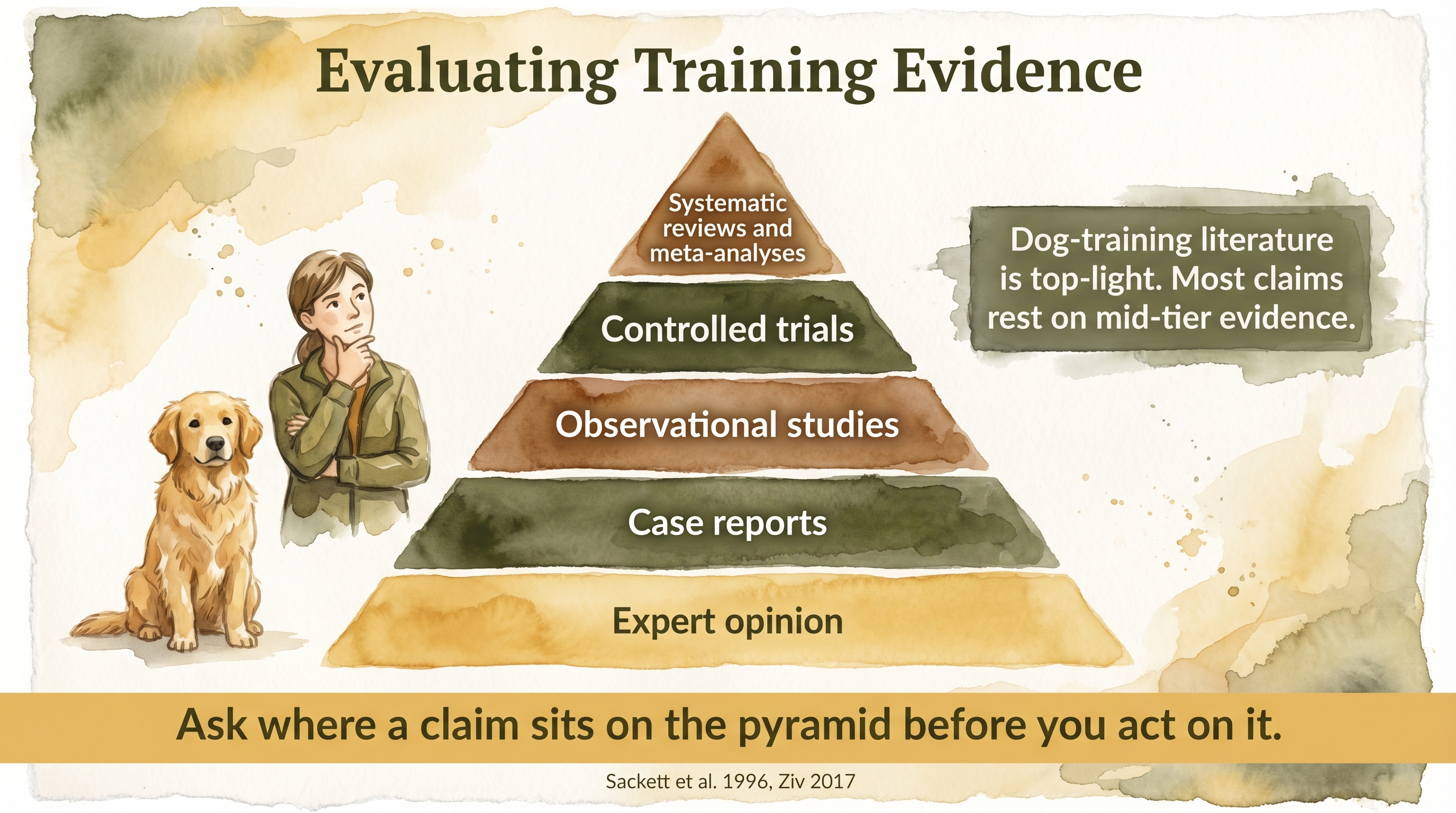

At the top of the hierarchy sit systematic reviews and meta-analyses because they gather and compare multiple studies through explicit inclusion criteria. [Documented] Ziv 2017 is important partly for this reason. Below reviews sit randomized or controlled trials. In dog training, these are uncommon and often ethically constrained, especially when aversive exposure is involved. Below those come cohort studies and observational school comparisons, which can show meaningful patterns while remaining vulnerable to self-selection and contextual confounds. Cross-sectional surveys sit lower because they often rely on memory, owner interpretation, and unmeasured baseline differences. Testimonials and before-and-after videos sit at the bottom because they are maximally vulnerable to selection and presentation bias.

The hierarchy is not everything. A weak systematic review is not automatically better than a strong controlled experiment. Still, it gives families a starting map. When a trainer says "the science proves," the first response should be to ask what tier of evidence is actually doing the work.
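One way to picture the hierarchy as a starting map is to treat the tiers as an ordered scale. The sketch below is a minimal, hypothetical Python illustration: the names `EvidenceTier` and `strongest_tier` are invented here, and the numeric ranks encode order only, not how much more one tier counts than another. It does not capture study quality within a tier, which can outweigh the tier itself.

```python
from enum import IntEnum


class EvidenceTier(IntEnum):
    """Tiers from the hierarchy described above; higher value = higher tier.

    The numbers are an illustrative ordering only, not a measured weight.
    """
    TESTIMONIAL_OR_VIDEO = 1
    CROSS_SECTIONAL_SURVEY = 2
    COHORT_OR_OBSERVATIONAL = 3
    RANDOMIZED_OR_CONTROLLED_TRIAL = 4
    SYSTEMATIC_REVIEW_OR_META_ANALYSIS = 5


def strongest_tier(sources):
    """Return the highest tier among the sources offered for a claim."""
    if not sources:
        raise ValueError("a claim with no sources has no evidence tier")
    return max(sources)


# Hypothetical claim backed only by a sales video and an owner survey.
claim_sources = [
    EvidenceTier.TESTIMONIAL_OR_VIDEO,
    EvidenceTier.CROSS_SECTIONAL_SURVEY,
]
print(strongest_tier(claim_sources).name)  # CROSS_SECTIONAL_SURVEY
```

Asking "what tier is doing the work?" amounts to finding that maximum, then checking whether the study at that tier was actually done well.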

Then Ask What Was Really Measured

Dog-training studies often measure different things that are casually discussed as if they were interchangeable. [Observed-JB] Some measure task performance, such as recall accuracy or obedience scores. Some measure acute stress indicators, such as lip licking, yawning, body posture, or salivary cortisol. Some measure owner-reported behavior problems. A much smaller number look at cognitive bias, attachment behavior, or longer follow-up.

That distinction matters because a method can perform well on one metric and poorly on another. Remote-collar protocols can suppress behavior quickly while still producing higher stress indicators. Reward-based methods can have a cleaner welfare profile while still showing weaker transfer in a poorly managed household. Families who do not ask what the outcome variable was are at constant risk of confusing "effective at this narrow task" with "best for the dog overall."

Bias Is Not a Side Issue Here

The notebooks repeatedly identify the direction-of-effect problem as a field-wide constraint. Owners choose methods. They are not usually assigned to them randomly. That means owners using reward-based methods may differ in patience, education, philosophy, available time, or willingness to practice. Owners reaching for aversive methods may already be handling harder cases, be under greater stress, or have dogs with more severe baseline problems. [Documented] Those differences can shape outcomes independently of the method itself.
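The arithmetic behind that point can be shown with a small worked example. The Python sketch below uses entirely invented counts, not data from any study, and the names `OBSERVED` and `problem_rate` are hypothetical. It deliberately builds in the assumption that the method has no effect at all, so that any gap in the crude comparison comes only from which owners chose which method. It is not a claim that real methods are equivalent.

```python
# Hypothetical counts, invented for illustration only.
# Key: (method, baseline severity) -> (dogs, dogs with a later behavior problem)
# By construction, the problem rate depends only on baseline severity:
# 10% for mild cases and 50% for severe cases, whatever the method.
OBSERVED = {
    ("reward", "mild"): (80, 8),
    ("reward", "severe"): (20, 10),
    ("aversive", "mild"): (30, 3),
    ("aversive", "severe"): (70, 35),
}


def problem_rate(method, severity=None):
    """Share of dogs with a later problem, optionally within one severity group."""
    rows = [
        counts
        for (m, s), counts in OBSERVED.items()
        if m == method and (severity is None or s == severity)
    ]
    dogs = sum(d for d, _ in rows)
    problems = sum(p for _, p in rows)
    return problems / dogs


# Crude comparison, ignoring baseline severity: the methods look different.
print(problem_rate("reward"))    # 0.18
print(problem_rate("aversive"))  # 0.38

# Comparison within each severity group: the difference disappears.
for severity in ("mild", "severe"):
    print(severity, problem_rate("reward", severity), problem_rate("aversive", severity))
```

In this toy data the aversive group shows roughly double the problem rate only because more of its dogs started out as severe cases. A survey that never recorded baseline severity could not tell that apart from a real effect of the method.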

Measurement bias matters too. Lamb et al. 2018 showed that professionals can overestimate outcome success relative to validated measures. Owner surveys bring recall bias, confirmation bias, and social-desirability bias. Publication bias is also plausible: dramatic welfare harms or strong positive claims are more likely to get noticed than flat null results. Funding bias, while less prominent than in pharmaceutical research, still matters because many dog-training studies are embedded in institutions or advocacy cultures with clear philosophical preferences.

Precision matters in evidence reading the same way it matters in communication with dogs. A family that learns to separate task success, welfare cost, and long-term durability is much harder to mislead by broad marketing language.

Why Dog Training Is Hard to Study Cleanly

The field is hard to study for reasons that are not excuses so much as real constraints. [Documented] Blinding is nearly impossible because handlers know what method they are using. Behavior is context-sensitive and can look different at home, at a training school, and in a lab-like test. Dogs differ by age, breed, history, and temperament. Owners differ by competence, emotion, and consistency. Long-term follow-up is expensive and difficult. Ethical review boards are understandably reluctant to authorize strong aversive exposure in companion animals just to settle a methodological debate.

These constraints do not make evidence impossible. They do mean that overconfident claims should trigger suspicion. The stronger the trainer sounds, the more carefully the family should inspect what sort of study would actually be capable of supporting the claim being made.

Why It Matters for Your Dog

For a Golden Retriever family, evidence evaluation matters because your actual decisions are almost never about abstract philosophy. They are about whether to sign up for a puppy class, whether to hire a private trainer, whether to pay for a board-and-train, whether to keep proofing with treats, whether a correction tool is warranted, and how much confidence to put in a promised outcome. Those are evidence questions even when nobody uses the word evidence.

Imagine two trainers making competing claims about recall. One says a remote collar is the only reliable way to proof the behavior around major distraction. The other says reward-based recall is all any family dog should ever need. A research-literate family will ask several things immediately. Was the claimed superiority shown in a randomized or controlled study, or only in case videos? Were stress outcomes measured, or only performance? How large was the sample? How close was the test context to ordinary family life? Did the dogs maintain the behavior after the training condition ended? Those questions slow down false certainty.

The same literacy helps with softer claims. A positive-reinforcement trainer may say her method is evidence-based and humane. That is directionally supported. Yet if the specific plan assumes perfect daily adherence, multiple practice sessions, and impeccable timing in a busy household, the family still needs to ask about real-world compliance and transfer. The evidence does not only compare methods. It also warns about the gap between what protocols ask of owners and what owners actually do.

Consider a Golden who greets guests by launching bodily into the room. One study may show that reward-based differential reinforcement reduces jumping in a class setting. [Documented] Another may show that aversive handling raises stress markers. Neither study alone tells you how the dog will behave in a chaotic household with tired children, inconsistent adults, and guests who squeal and pet anyway. Evidence evaluation keeps the family from demanding from the literature a precision it does not possess.

This matters especially because pleasant breeds can create false reassurance. A Golden may tolerate a mediocre plan longer than a more reactive breed. The family then assumes the method is scientifically confirmed because the dog remains social and forgiving. What may actually be happening is that the dog's temperament is masking methodological weakness. Evidence literacy helps families see the role of breed and baseline temperament in apparent success.

It also protects families from costly overinvestment. Board-and-train sales pages, social media clips, and trainer testimonials are built on the lowest-evidence tier, even when the work itself is competent. That does not make them worthless. It does mean they should not outweigh better-quality comparative research or the known limitations of transfer and owner follow-through. A polished transformation video is not a substitute for long-term outcome data.

The deeper value is psychological. Once families understand how to read evidence, they do not have to swing between gullibility and nihilism. They can say, "This study matters, but it does not prove everything," or "This claim sounds confident, but the supporting evidence is only anecdotal." That posture is calmer and more adult, which is useful not only scientifically but relationally. It leads to better choices and less panic-driven method shopping.

JB wants families in that posture because it also disciplines JB's own voice. If the field has not directly tested a raised-versus-trained comparison over years, JB should say so. If attachment evidence supports the importance of relationship but not every practical conclusion JB would like to draw, that distinction should stay visible. Evidence evaluation is not something JB applies only to rivals.

This mindset also helps families resist the glamour of complicated explanations. Sometimes the better-supported answer is the boring one: the study was small, the owner report was subjective, the follow-up was short, and the trainer is promising too much. [Documented] Research literacy does not only help families appreciate good studies. It helps them notice when ordinary methodological weakness is being dressed up as certainty because the sales story around the study is emotionally satisfying.

Seen that way, evidence evaluation becomes part of everyday dog stewardship rather than a separate academic hobby. It helps a family notice when a confident claim is really built on a tiny sample, when a dramatic demonstration has no long follow-up behind it, and when a protocol may be asking more precision from the household than the household can actually deliver. Those are all practical judgment calls, not seminar-room abstractions.

What This Means for a JB Family

The first practical takeaway is to start every training claim with "What study design is behind that?" [Heuristic] A systematic review or controlled trial deserves more weight than a testimonial or a montage. A direct stress measure deserves more weight than a vibe. A six-month follow-up deserves more weight than a same-day demonstration.

The second takeaway is to separate categories of success. Ask about performance, welfare, durability, and owner sustainability separately. A plan that performs well but collapses when the family gets busy is not a strong family plan. A tool that produces immediate compliance while increasing stress is not automatically a good bargain. A method with beautiful moral language but impossible adherence demands is also not automatically a good bargain.

Third, use the literature to define ethical starting points rather than to eliminate judgment. The best-supported ethical starting point is to prefer lower-welfare-risk teaching strategies first. The best-supported caution is to distrust aversive-heavy promises of superior outcomes. Beyond that, a family still has to inspect context, trainer quality, household capacity, and what the dog is becoming over time.

Finally, keep the same discipline when reading JB. The knowledge base should model what it asks of others. Where the field is strong, JB can speak firmly. Where the field is thin, JB should make the uncertainty part of the content. That is not weakness. It is how a serious family avoids being sold confidence that the evidence has not earned.

One practical habit follows naturally from that. When a family hears a strong training claim, they can ask three quick questions before reacting emotionally: what kind of study is this based on, what exact outcome was measured, and how long did the result last? Those questions are short enough to use in real life and strong enough to expose a surprising amount of overstatement.

That habit also improves conversations inside the home. When adults disagree about what to do with a dog, evidence language can lower the temperature. Instead of arguing in slogans such as "he needs consequences" or "she just needs more treats," the family can return to measurable questions: what outcome are we trying to change, what route has the lowest known welfare cost, and what can we sustain consistently for months rather than for one emotional weekend.

Ask where a claim sits on the pyramid before you act on it.

Key Takeaways

- The quality of a dog-training claim depends heavily on study design, not just on whether a study exists.

- Families should ask what was measured, how long dogs were followed, and what biases could explain the result.

- Selection bias, owner-report bias, and weak long-term follow-up are structural problems across the field.

- Good evidence reading protects families from both anti-science cynicism and science-flavored marketing overreach.

The Evidence

This entry uses observed claim-level tags beyond the dedicated EvidenceBlocks below. These tags mark JB program observation or practice-derived claims that need dedicated EvidenceBlock coverage in a later content pass.

This entry uses heuristic claim-level tags beyond the dedicated EvidenceBlocks below. These tags mark JB interpretive application rather than direct study findings.

- Companion dogs. Lays out the direction-of-effect problem, inconsistent method definitions, and rarity of strong randomized comparisons.

- Lamb et al. (2018), companion dogs. Shows that professional assessment of success can diverge from validated behavioral outcome measures.

- Powell et al. (2021), companion dogs. Demonstrates that owner characteristics influence treatment outcome independently of prescribed protocol.

- Ziv (2017), Vieira de Castro (2020), Cooper (2014), and China (2020), companion dogs. Together illustrate how evidence weight rises when multiple designs converge on a similar welfare conclusion.

- Domestic dogs. No published long-term study directly compares how dog training evidence is evaluated against structured relational raising and no-intervention controls across ordinary companion-dog homes.

SCR References

Sources

- Lamb, L., et al. (2018). Frontiers in Veterinary Science.

- Powell, L., Stefanovski, D., Siracusa, C., & Serpell, J. A. (2021). Frontiers in Veterinary Science, 7, 630931. https://doi.org/10.3389/fvets.2020.630931

- Ziv, G. (2017). Journal of Veterinary Behavior.

- Vieira de Castro, A. C., et al. (2020). PLOS ONE.

- Cooper, J. J., Cracknell, N., Hardiman, J., Wright, H., & Mills, D. (2014). The welfare consequences and efficacy of training pet dogs with remote electronic training collars in comparison to reward based training. PLOS ONE, 9(9), e102722. https://doi.org/10.1371/journal.pone.0102722